Protobuf/Sparkplug (Beta)

Protobuf/Sparkplug messages work via the general MQTT architecture. Specially designed functions help to integrate them into the regular MQTT message stream.

Protobuf and Sparkplug are two technologies that work together within the context of MQTT. Protobuf is a binary serialization format developed by Google, while Sparkplug is an open-source project that defines a standard set of payloads for use with MQTT. Together, they allow applications to send data over networks in a compact format. Protobuf encodes messages into binary form before they are sent across the network, which typically reduces bandwidth usage and latency compared to text formats such as XML or JSON. Sparkplug adds features like message type identification and versioning, so that receivers can interpret incoming messages without prior knowledge of their contents or structure. Both technologies are useful in IoT applications because of their efficiency and ease of use: they enable devices from different vendors to communicate with each other using common protocols, regardless of platform differences.
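To illustrate why a binary encoding is more compact, the following minimal Python sketch encodes a single integer field the way Protobuf's wire format does (a tag byte followed by a base-128 "varint") and compares it with the same value as JSON. The field name "seq" and the field number 3 are chosen only for illustration, and the helper names are hypothetical. This is not how Streamsheets encodes messages internally.

```python
import json


def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a Protobuf base-128 varint."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative integers")
    out = bytearray()
    while True:
        low7 = value & 0x7F          # take the lowest 7 bits
        value >>= 7
        if value:
            out.append(low7 | 0x80)  # set the continuation bit: more bytes follow
        else:
            out.append(low7)         # last byte: continuation bit cleared
            return bytes(out)


def encode_uint_field(field_number: int, value: int) -> bytes:
    """Encode one varint field: tag (field number << 3 | wire type 0) plus value."""
    tag = (field_number << 3) | 0
    return encode_varint(tag) + encode_varint(value)


# Assumption for illustration: a field named "seq" with field number 3.
seq = 300
as_json = json.dumps({"seq": seq}).encode("utf-8")
as_protobuf = encode_uint_field(3, seq)

print(len(as_json), "bytes as JSON")
print(len(as_protobuf), "bytes as Protobuf-style binary")
```

The binary form omits the field name entirely and relies on the field number instead, which is why sender and receiver need the same message definition.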

MQTT Connection

An MQTT connection to a broker is required as a basis. Find out here how to define the details.

Subscription

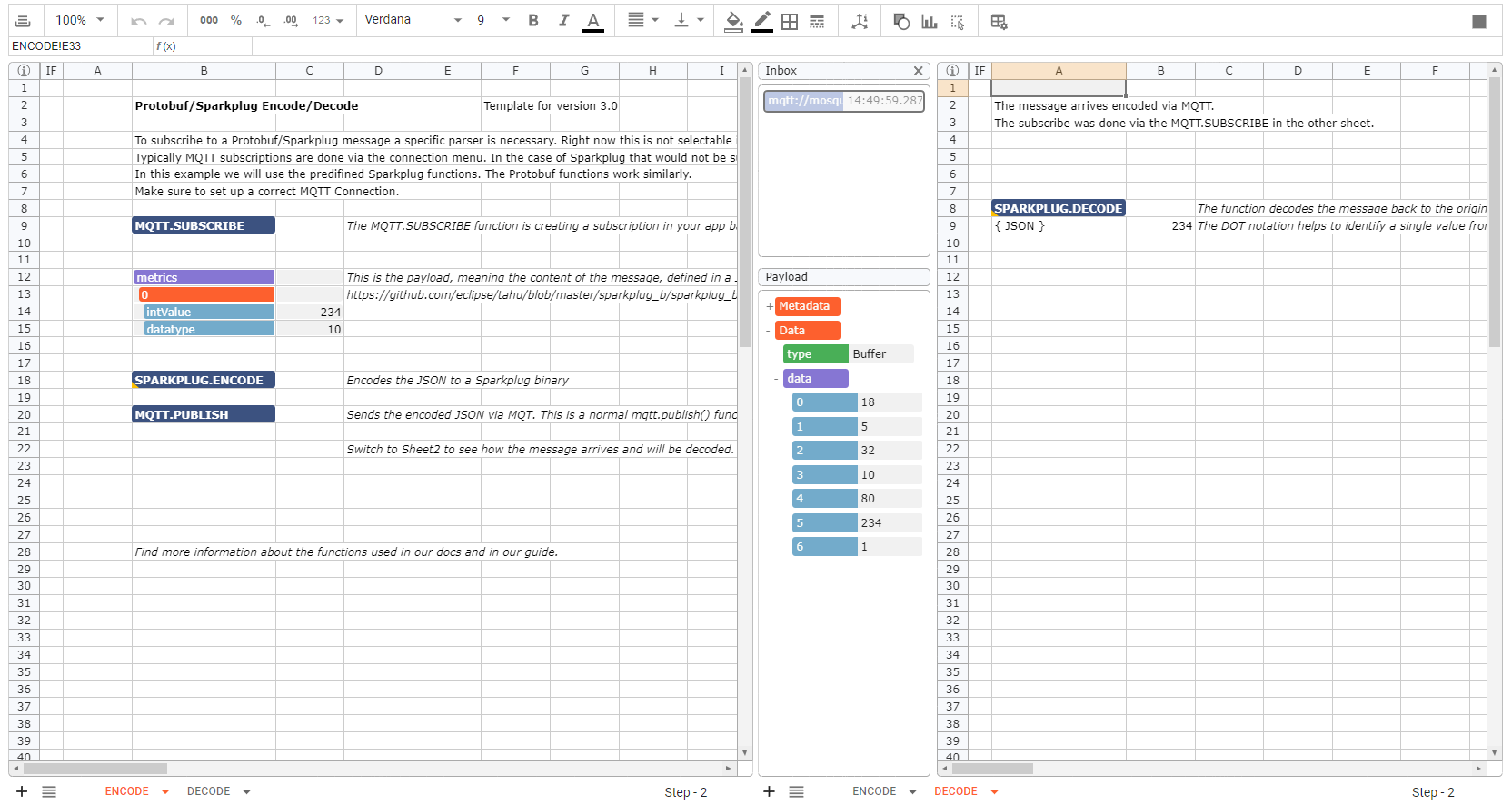

Protobuf/Sparkplug messages are binary encoded. By default, the inbox supports only "regular" data formats such as JSON or strings. To work with binary payloads, the "DataType" setting has to be set accordingly. This is not yet configurable via the subscription menu in the connection, so use the MQTT.SUBSCRIBE() function instead. MQTT.SUBSCRIBE creates a subscription in your app, based on a defined connection, and directs it to a specific Inbox. Trigger this function first to make sure that the Inbox in Sheet2 is configured for the "binary" payload before a message is sent via MQTT.PUBLISH.

=MQTT.SUBSCRIBE(MQTT_Connection!,"topic",INBOX(Sheet2!),,,,,,"binary")

The data type parameter set to "binary" allows an inbox to interpret the incoming binary message. Make sure this function is triggered once, before the message you want to receive arrives in your inbox.

Sparkplug Functions

To work with Sparkplug messages in Streamsheets, you can either encode or decode a message.

A message to be encoded or decoded always has to be structured as defined in the Sparkplug specification. The payload can have five different fields, described here ("timestamp", "metrics", "seq", "uuid" and "body").
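As a rough illustration of such a payload, the Python sketch below builds a dictionary with the five top-level fields named above and prints it as JSON. The helper name build_payload and the keys inside each metric ("name", "dataType", "value") are assumptions made for this example; check the Sparkplug specification for the exact structure. The sketch also assumes millisecond timestamps and a sequence number that wraps within 0 to 255.

```python
import json
import time


def build_payload(seq, metrics, uuid=None, body=None):
    """Build a dict with the top-level Sparkplug payload fields (hypothetical helper)."""
    payload = {
        "timestamp": int(time.time() * 1000),  # assumed: milliseconds since epoch
        "metrics": metrics,
        "seq": seq % 256,                      # assumed: sequence wraps within 0..255
    }
    # "uuid" and "body" are optional, so only include them when provided.
    if uuid is not None:
        payload["uuid"] = uuid
    if body is not None:
        payload["body"] = body
    return payload


# The metric keys below are illustrative, not taken from the Streamsheets docs.
example_metrics = [
    {"name": "temperature", "dataType": "Double", "value": 21.5},
]

payload = build_payload(seq=256, metrics=example_metrics)
print(json.dumps(payload, indent=2))
```

In Streamsheets, the equivalent structure would be laid out as JSON in cells and referenced in the message parameter of the encoding function.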

For encoding, create the JSON structure that represents your data and reference it in the message parameter. The encoded data appears in the specified target cell or in the function cell itself. Then use an MQTT.PUBLISH() function to send the data by referencing the encoded payload as the MQTT payload.

For decoding, make sure that you have subscribed to the topic the binary/Sparkplug message is sent to, as described in Subscription. The decoding function has to reference the "data" array from the inbox in the message parameter. The fastest way to do so is to use the dot notation: INBOXDATA.data

e.g. =SPARKPLUG.DECODE(INBOXDATA.data,A9)

A9 in this example now shows the original JSON of the Sparkplug message.

Protobuf Functions

Protobuf functions work similarly to the Sparkplug ones, but require some further clarification. The main difference is that Protobuf messages can be based on different underlying Protobuf files, while Sparkplug always uses the same one. To address this difference, the Protobuf functions have two further parameters.

- MessageType: Selects a message type defined within the Protobuf file

- Protobuf file: Defines the structure of the content of a Protobuf message. Both sender and receiver of the Protobuf message have to use the same file for encoding and decoding to work.

Mount a .proto file

The Protobuf file has to be placed manually in your file system so that Streamsheets can read it. Its path has to be mounted in the docker-compose.yml of your installation. If you are a hosted customer, please contact support so they can arrange this configuration for you.

To add a mount, open your .yml file and find the "volumes" section under the "streamsheets" configuration. There, add a statement like the following:

- ./sparkplug_b.proto:/protobuf/sparkplug_b.proto

This maps a file from your operating system into the docker container. The ":" in the middle separates the two paths: the left path is the one on your operating system and the right one is the path within docker. With this setting, docker looks for a file called "sparkplug_b.proto" on the same level as your docker-compose.yml file and mounts it at the docker path on the right. Now restart your installation and use the docker path in your functions to tell Streamsheets where to find the file.

- =PROTOBUF.ENCODE(B12:C15,"Payload","/protobuf/sparkplug_b.proto")

- =PROTOBUF.DECODE(INBOXDATA.data,"Payload","/protobuf/sparkplug_b.proto",A9)
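The way the mount statement from the "Mount a .proto file" section splits into a host path and a docker path can be sketched in Python as follows. The function name split_volume and the compose directory "/opt/streamsheets" are hypothetical. The sketch handles only simple POSIX-style "host:container" mappings (optionally followed by a mode such as "ro"), not Windows drive letters.

```python
from pathlib import PurePosixPath


def split_volume(mapping: str, compose_dir: str):
    """Split a 'host:container[:mode]' mapping and resolve the host path.

    Relative host paths are resolved against the directory that contains
    docker-compose.yml (passed in as compose_dir).
    """
    parts = mapping.split(":")
    if len(parts) not in (2, 3):
        raise ValueError(f"unsupported volume mapping: {mapping!r}")
    host, container = parts[0], parts[1]
    # Joining with an absolute host path keeps the absolute path unchanged.
    host_path = PurePosixPath(compose_dir) / host
    return str(host_path), container


# Assumed location of docker-compose.yml, for illustration only.
host_path, docker_path = split_volume(
    "./sparkplug_b.proto:/protobuf/sparkplug_b.proto",
    "/opt/streamsheets",
)
print("host file:  ", host_path)
print("docker path:", docker_path)
```

The second value is the path to pass to PROTOBUF.ENCODE and PROTOBUF.DECODE, since Streamsheets only sees the file system inside docker.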